How we communicate with machines

Research on human-machine interaction has advanced significantly in recent years, according to computer scientist David Ahlström. He spoke to us about stumbling blocks and the potential for future improvement.

David Ahlström, I am perfectly satisfied with the operation of my technological devices – smartphone, tablet computer, laptop – and cannot imagine what else might need to be done here. Is there any work left to do in your field of research?

Ahlström (laughs): That is a question I frequently put to my students. Why do we need the research area of human-machine interaction if everything is already running so smoothly? But we must bear in mind that it was a long and arduous path to get where we are today. For the everyday user, many things appear to work easily and well. In research, we are still aware of several stumbling blocks that we must overcome. Many of these are niche topics, but addressing them may nonetheless prove beneficial for many people.

Which directions are you thinking of?

The basic idea is this: The interaction between human and machine should be as seamless as possible. The device should understand the human. Going forward, it should become less and less necessary for the human to adapt to the technical demands of the machine.

These are extraordinary words from a computer scientist, who might be expected to be concerned primarily with technology. Where does this perspective come from?

I wasn’t particularly interested in computers, even back when I decided to study computer science. To be honest, I am still not very interested in them today. I regard them as tools that need to function. That is what I expect from them. Originally, I wanted to teach “technical crafts”, but when it was time to take the entrance examination at the arts university in my native country of Sweden, I decided to opt for the joint trip that marked the conclusion of my military service instead. And that is how I landed in computer science, temporarily at first, but I ended up staying. The interaction between human and machine is closely linked to visual communication. My artistic-cultural interests offered quite a good fit with the research field I later settled on.

Where do you still see stumbling blocks when it comes to using everyday devices?

Imagine, if you will, that you are walking along a street with a child or a shopping bag in your arms, keeping a watchful eye on the traffic and on other pedestrians while you consider your next steps. Your smartphone rings. In this situation it would be helpful if you could answer the call without having to glance at your phone. In other words, it would be great if you could simply swipe anywhere on the screen to pick up the call. We could take this idea even further and say that it should be sufficient to perform a specific gesture in the vicinity of the smartphone, which would then trigger an appropriate reaction by the device. There are many areas where using gestures for control purposes might be useful.

What would this require?

Different sensors would need to be built into the device, for instance cameras capable of object recognition. Equipped with these, the device would be able to recognize my hands and the gestures I perform. The device would even be able to recognize which objects I, the user, am touching.

What could such a function be used for?

Let me give you an example: I recently moved into an older apartment, where the light switches and the electrical sockets are located in completely unfavourable positions. In the future, light switches will be replaced by a smart solution. This could involve small 3D strips with a certain pattern printed onto them. These are simply affixed to the walls in spots where I could really use a light switch. If I stroke my finger over such a 3D strip, the control unit recognizes that I wish to turn on the light. The device also knows that I would like to have the light dimmed to a certain brightness level. However, if my girlfriend strokes her finger across the 3D strip, the device knows her light preferences and switches the lights on with a different brightness level.

How does this work?

Certain sensors are required when conducting research in this area. We work with acceleration sensors, which can also be used to measure vibrations. If you were to tap your fingers on a table surface next to a mobile phone, for instance, the device would be able to detect the vibrations. The same applies when I run my hands over certain surfaces. When considering these developments, we must always ask ourselves why we want to build these applications. The question is not only what is technically feasible, but also, from the human point of view, what is possible, what is desirable, and what is practicable.

Are there any areas where it is not the technical possibilities that are driving the supply, but rather the needs of humankind?

Yes, this is especially true in the area of visual communication. When it comes to deciding what to show in which way on a display and which colours, symbols and contrasts to pick, the human usually serves as the starting point of the development process. A well-designed screen must clearly and unambiguously express what the machine requires from the human. This necessitates close collaboration between designers and future users, as well as numerous studies, where future users can test and evaluate different design proposals.

A large manufacturer of smartphones recently announced its intention to bring the holograms familiar to us from science fiction films to its mobile devices. Do you think this is realistic?

As far as these developments are concerned, we must ask ourselves: Do we want this? Why do we want it? It would make it easier, for example, to hold a meeting with people who are physically located on the other side of the world. If a room has been equipped with cameras on the walls or on the ceiling, these cameras are already capable of measuring with absolute precision where my finger is moving and which commands I am issuing. I think it will be somewhat more difficult to offer this functionality on mobile devices. In any case, the area of application is rather narrow: Do I really want to chat to my hologram mother in public? This issue is similar to voice control, which needs a certain – usually quiet, private – environment to be truly useful.

for ad astra: Romy Müller

translation: Karen Meehan

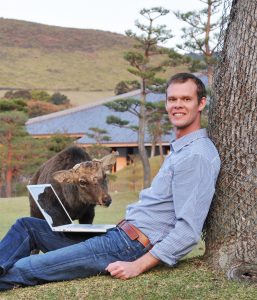

About the person

David Ahlström is associate professor at the Department of Informatics Systems. He read computer science at the University in Stockholm, Sweden. After completing an ERASMUS exchange year at the University of Vienna and concluding his studies, he worked for Siemens in Vienna for two years, after which he came to Klagenfurt. In 2008 he received an eighteen-month Erwin Schrödinger scholarship from the Austrian Science Fund and joined the Computer Science and Software Engineering Department at the University of Canterbury in Christchurch, New Zealand. He completed his postdoctoral qualification on the topic of human-machine interaction in 2015. Ahlström has recently spent some time teaching and researching at the University of Manitoba (Canada). His current research is focused on graphical user interfaces, interaction mechanisms, interactive systems, graphics and design, and interaction design.