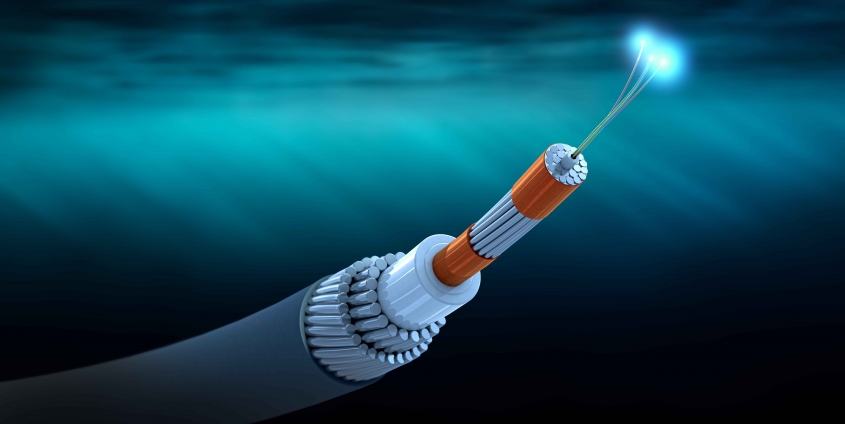

Data pipelines: New measures to tackle the last few congested metres

Today’s data networks are well developed. Yet although data can pass through the pipelines smoothly and largely unhindered, the last few metres of the pipeline represent a bottleneck. Firewalls, security measures and the restrictions imposed by the processing software all tend to slow down processing. Now, thanks to a new H2020 project, a research team at the Department of Information Technology, led by Radu Prodan, has started work on new measures aimed at tackling the last few congested metres.

“We are approaching the issue on several levels with our project”, Radu Prodan tells us. He leads the project at the University of Klagenfurt. The first level is the so-called dark data. Prodan explains: “Much more data is generated than is actually used. The industry estimates that around 80 percent of the data is of no value and that preserving it brings companies more risks than benefits. If process mining can be used to identify the structure of the data, assign it to the relevant processes and discard meaningless data, the overall process can be made more efficient and secure.” This, Prodan argues, requires rethinking the complete software stack, starting with new domain-specific programming languages.

“Programming languages are a delicate issue in computer science. A great deal of software is still programmed using old (and often outdated) languages. Yet the requirements are becoming ever more diverse, so it is not reasonable to assume that a single language can be used successfully for everything”, Prodan continues. Accordingly, the research team will propose different languages for each of the steps in the big data workflow processing pipeline.

In a third step, the researchers will simulate how the new technology works. A simulator will re-enact the pipelines as accurately as possible to determine how the system performs in real-world conditions. To this end, the research team is guided by five application cases that are part of the project: two come from companies active in Industry 4.0, one is concerned with multimedia sports broadcasting, one with digital marketing, and one with patient eHealth data management.

These different fields of application have one thing in common: all of them need to solve data processing issues quickly and efficiently. Here, the idea of the cloud continuum proves helpful. Radu Prodan explains: “We all know and use cloud computing today, for example the cloud services provided by Google, Amazon, Microsoft, etc. This involves the data being stored and processed centrally, raising security and privacy concerns. By contrast, the cloud continuum concept is based on the assumption that we carry mini-clouds around with us in the form of our smartphones or other terminal devices. These resources are used to ensure that data stays in the hands of its owner and the processing is confidential, reliable and democratically organised by means of blockchains. In other words, we are proposing a democratic system at the level of resources.”

The project, which bears the title “DataCloud: Enabling the Big Data Pipeline Lifecycle on the Computing Continuum”, was written during the lockdown period and was approved with maximum points (15 out of 15) under the EU’s Horizon 2020 programme, out of a total of 96 submitted proposals. The acceptance rate was 5.2%. The research team is expected to work on the project for the next three years. It has a total budget of 5 million euros. The project is coordinated by SINTEF AS (an independent Norwegian research institute) and has three university partners (Sapienza University of Rome, University of Klagenfurt and Royal Institute of Technology) and seven industrial partners: iExec Blockchain Tech SAS (France), The Ubiquitous Technologies Company (Greece), JOT Internet Media (Spain), MOG Technologies SA (Portugal), Ceramica Catalano SRL (Italy), Tellu IOT AS (Norway) and Robert Bosch GmbH (Germany).

Photo: Christoph Burgstedt/Adobestock.com
